Introduction

Sacling kubernetes cluster is crucial for optimizing both cost & performance in modern cloud infrastructure. how traditional scaling approaches often lead to inefficient resource utilization, slow scaling response times, and complex management overhead.

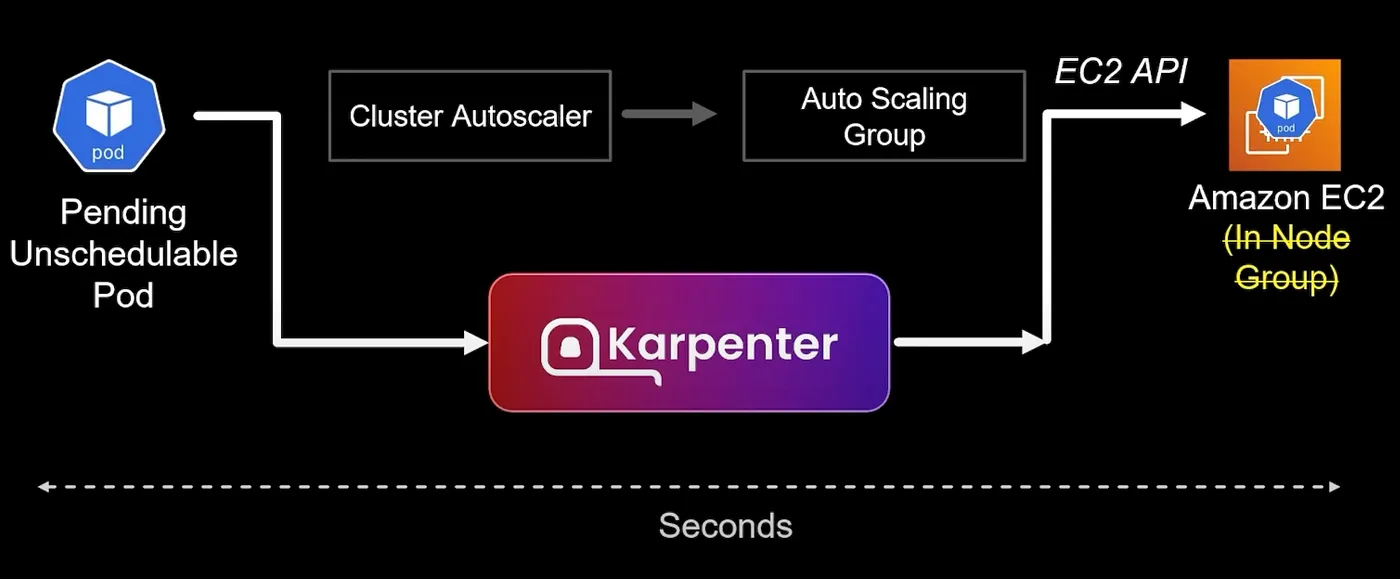

If you’ve ever struggled with Kubernetes scaling (and who hasn’t?), you’ll appreciate how Karpenter simplifies this process by directly provisioning EC2 instances based on workload requirements. It eliminates the need for predefined node groups and reduces scaling latency from minutes to seconds. Trust me, when you’re dealing with production traffic spikes, those minutes matter!

TL;DR

-

Karpenter is a Kubernetes-native autoscaler that provisions nodes in seconds rather than minutes

-

Unlike Cluster Autoscaler, Karpenter doesn’t require node groups and can automatically select the best instance types for your workloads

-

You’ll learn how to configure NodePools and EC2NodeClass to optimize for different workload types, architectures, and cost models

Understanding scaling challenges

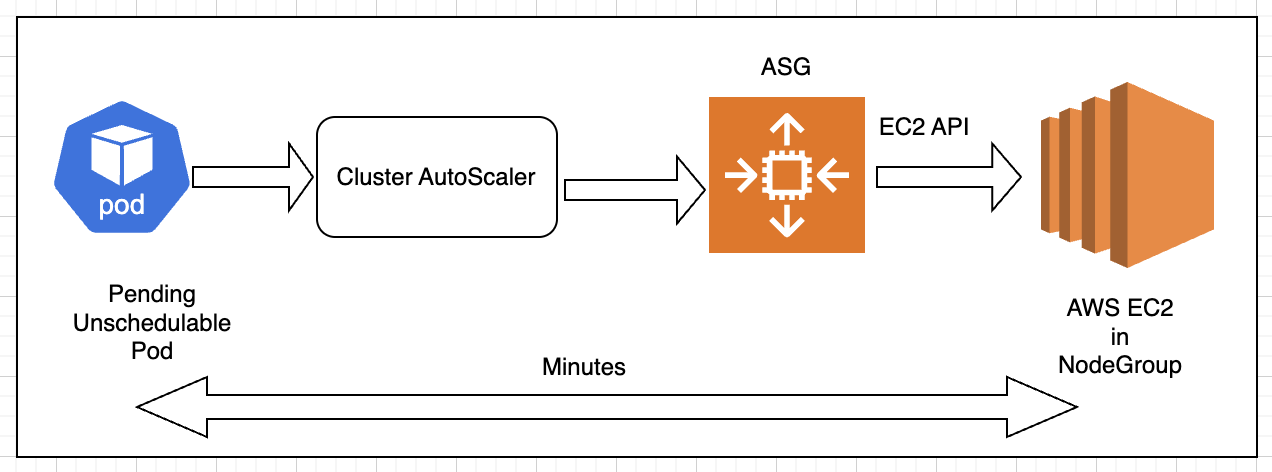

Before diving into Karpenter, let’s understand the traditional Kubernetes scaling flow:

-

Your application runs in pods on worker nodes (EC2 instances)

-

As traffic increases, Horizontal Pod Autoscaler (HPA) creates additional pods

-

When existing nodes can’t accommodate new pods, they remain in

Pendingstate -

A node autoscaler detects these pending pods and provisions new nodes

-

The scheduler then places the pending pods on the newly provisioned nodes

This sounds straightforward, but I’ve encountered numerous limitations with traditional node autoscalers like Cluster Autoscaler in real-world scenarios:

Cluster Autoscaler requires you to:

-

Create node groups with predefined instance types

-

Configure Auto Scaling Groups (ASGs) for each node group

-

Manually update node groups when new instance types are needed

This creates several challenges

-

Node provision latency: The multi-step process (Cluster Autoscaler → ASG → EC2 API) takes minutes.

-

Node group management overhead: You must create and manage separate node groups for different instance types. Managing a cluster with ,multiple node groups with different configs is a nightmare.

-

Limited instance type flexibility: Each node group can only contain compatible instance types. When AWS released new, more cost-effective instance types, updating all our node groups was a tedious process.

-

Resource utilization inefficiencies: Predefined node groups often lead to underutilized resources.

For example, if an application suddenly needs GPU instances, but your node groups only contain general-purpose instances, the GPU workload will remain pending until you manually create a new GPU node group.

What is Karpenter?

Karpenter is an open-source node autoscaler for Kubernetes that was created by AWS and donated to the CNCF. It’s now a CNCF project under SIG Autoscaling and has been adopted by multiple cloud providers, including GCP & Azure.

Karpenter takes a fundamentally different approach to node scaling:

-

Direct EC2 provisioning: Karpenter bypasses node groups and ASGs, directly calling EC2 APIs

-

Workload-driven scaling: It provisions nodes based on pod requirements rather than predefined node groups

-

Automatic instance selection: Karpenter can choose from all available instance types to find the best match

-

Kubernetes-native: It uses standard Kubernetes scheduling constraints and YAML configuration

Beyond just scaling, Karpenter also:

-

Optimizes cost through consolidation and right-sizing

-

Supports diverse workloads, including ML and generative AI

-

Facilitates cluster upgrades and patching

-

Integrates deeply with Kubernetes scheduling